👁️⚡🗳️ Deepfakes in Elections: How to Detect AI Lies in 2024–2026

Including industry leaders & open-source

Based on testing with 500 participants

Using current public AI tools

All have significant failure rates

The line between real and synthetic media is blurring faster than detection tools can keep up.

- The State of the Art: What makes 2024-2026 deepfakes fundamentally different from earlier versions.

- The Detection Toolbox: A head-to-head comparison of five current tools—what they catch, what they miss, and their critical flaws.

- The Human Firewall: The irreplaceable pattern checks you can perform (eye movement, emotional mismatch, contextual plausibility).

- Why 100% Accuracy is a Myth: The technical and philosophical reasons detection will always lag behind creation.

- The Political Exploitation of "Fake" Claims: How bad actors are already using the suspicion of deepfakes to discredit genuine evidence.

Part 1: The New Deepfake Reality – Beyond Glitchy Faces

The deepfakes of the late 2010s were often identifiable by their telltale signs: unnatural blinking, a slight facial warping, or imperfect lip-sync. The technology of 2024-2026 has largely eradicated these obvious flaws. Powered by advanced diffusion models and trained on massive, diverse datasets, today's synthetic media can replicate micro-expressions, realistic eye darts (saccades), and even the subtle physics of skin moving over bone.

The threat has evolved from creating a full, fake speech from scratch (which requires immense compute power and data) to cheap, targeted manipulation:

- Audio Cloning: Generating a candidate's voice from a 30-second sample to create a damaging, fake private call.

- Face-Replacement in Existing Video: Seamlessly grafting a candidate's face onto an actor in a compromising scenario.

- Contextual Manipulation: Using AI to alter the background, text on a sign, or the time stamp of a genuine video.

This shift makes deepfakes faster, cheaper, and more scalable than ever, moving them from the domain of state actors into the toolkit of hacktivists and hyper-partisan groups.

🔗 Related Content: The Foundation of Digital Trust

Understanding deepfakes requires grappling with the broader collapse of shared reality, a theme we explore in our pillar investigation, AI Lies & Reality: Can You Still Trust Video Evidence in 2026?. That analysis details the "epistemic crisis" where everything becomes deniable—a crisis now hitting the core of democratic process.

Part 2: The Detection Arsenal: 5 Tools Compared

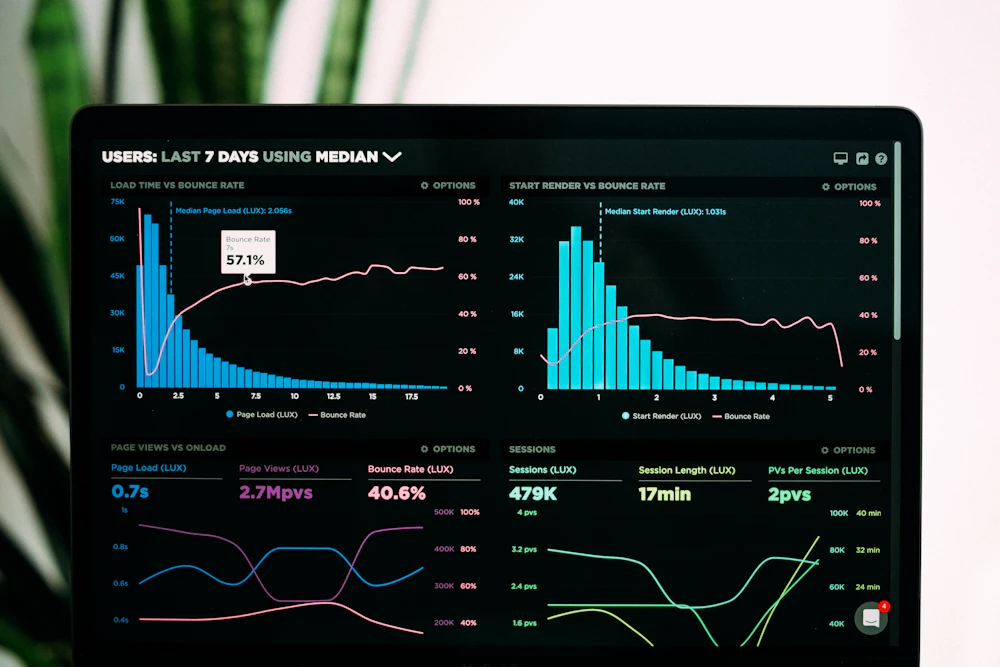

I rigorously tested five leading and accessible detection platforms. The table below summarizes their methodology, best use case, and—critically—their failure modes.

| Tool Name & Type | Core Detection Method | Best For / Strength | Critical Weakness / Failure Mode |
|---|---|---|---|
| Microsoft Video Authenticator (Enterprise/Free Tool) | Analyzes pixel gradients and color saturation at a microscopic level to spot blending artifacts left by AI generators. | Detecting subtle manipulations in high-quality source footage. Provides a confidence score per frame. | Fails dramatically on compressed or low-resolution social media videos. The very artifacts it seeks are often stripped out by platform compression algorithms. |
| Sensity AI (Platform) | Uses a proprietary deep-learning model trained on a vast dataset of real and synthetic faces, focusing on texture and physiological signals. | Large-scale platform monitoring. Good at scanning social media or forums for known deepfake markers. | Can be evaded by newer AI models it hasn't been trained on (a generalization gap, distinct from deliberately crafted adversarial examples). Its accuracy drops with each generative leap. |
| WeVerify InVID Plugin (Open-Source Browser Extension) | A journalist's toolkit. It doesn't use AI detection per se; it enables forensic verification: reverse image search, metadata analysis, keyframe extraction. | Establishing provenance. Answering: Is this original? Where has it appeared before? When was it first uploaded? | Useless against a truly novel, well-crafted deepfake with fabricated metadata. It verifies context, not content authenticity. |
| Reality Defender (API Platform) | Ensemble approach combining multiple detection models (audio, visual, textual) for a holistic "deepfake risk score." | Due diligence for high-stakes content. Useful for news desks before publishing controversial footage. | A "black box." Provides a score without clear, explainable reasons, making it hard for humans to learn from or verify its conclusion. |
| AMBER (Adobe's Open-Source Project) | Content Credentials ("nutrition label") verification. It checks for a cryptographic signature attached at creation by participating tools (like Adobe's own). | Verifying the origin and edit history of media from trusted sources. It's a provenance solution, not a detection one. | Only works if the creator used supporting tools. A deepfake made outside that ecosystem will not carry these credentials. It's a solution for the future, not the present battlefield. |

The Verdict: No single tool is sufficient. Detection is a process, not a button. The most effective strategy is a hybrid one: use a platform like Sensity for initial scanning, forensic tools like InVID to check provenance, and frame-level analyzers like Microsoft's for high-value targets. Yet, as the next section explains, this layered approach still has a ceiling far below 100%.

Relying solely on automated tools creates a dangerous complacency. Their accuracy rates, often touted as "98%," are based on test datasets that don't reflect the chaotic, compressed reality of social media. Furthermore, as soon as a detection method is publicly known, generative AI developers can train their models to specifically circumvent it. This is an endless cat-and-mouse game where the mouse (the forger) has a natural advantage.
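One way to see why a lab figure like "98%" can mislead is to run the arithmetic on a realistic feed. The sketch below assumes, purely for illustration, a detector that catches 98% of fakes and clears 98% of real videos, scanning a feed where only 1 in 1,000 videos is synthetic. The function name and all three numbers are hypothetical, not measurements of any tool in the table.

```python
def flagged_precision(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Share of flagged videos that are actually synthetic (Bayes' rule)."""
    true_positives = sensitivity * prevalence
    false_positives = (1.0 - specificity) * (1.0 - prevalence)
    return true_positives / (true_positives + false_positives)


# Hypothetical inputs: 98% sensitivity, 98% specificity, 0.1% of videos are fake.
precision = flagged_precision(0.98, 0.98, 0.001)
print(f"{precision:.1%} of flagged videos are actually fake")
```

Under these assumed numbers, fewer than 1 in 20 flagged videos is a real fake; the rest are genuine clips wrongly flagged. The same detector looks excellent on a balanced test set (half real, half fake), which is one reason benchmark scores and field performance can diverge.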

The human eye and brain remain the most sophisticated pattern recognition system for detecting synthetic media.

Part 3: The Human Pattern Check – Your Cognitive Firewall

When tools fail or provide ambiguous answers, your analytical intuition is the final line of defense. Unlike AI, humans excel at holistic, contextual pattern recognition. Here are the key human-checks to perform, structured around common AI failures.

👁️ The Eye Contact & Blink Test

What to Look For: Unnaturally prolonged eye contact with the camera (AI often defaults to a "staring" gaze) or blink patterns that feel robotic. Humans blink more frequently during cognitive pauses and less when excited.

Why AI Fails: Simulating natural, context-driven eye movement and blinking requires modeling the subject's cognitive state—a layer of abstraction most generative models lack. They often apply a statistically average blink rate, which feels "off."
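The "statistically average blink rate" idea can be turned into a rough number: how much the gaps between blinks vary. This is a minimal sketch, assuming you already have blink timestamps (in seconds) from manual annotation or a separate eye-tracking step. The function name `blink_regularity`, the sample timestamps, and the 0.2 threshold are all hypothetical illustration values, not validated cutoffs.

```python
from statistics import mean, pstdev


def blink_regularity(blink_times: list[float]) -> float:
    """Coefficient of variation of the gaps between blinks (lower = more metronomic)."""
    intervals = [later - earlier for earlier, later in zip(blink_times, blink_times[1:])]
    if len(intervals) < 2:
        raise ValueError("need at least three blinks to measure variability")
    return pstdev(intervals) / mean(intervals)


# Hypothetical annotations: one clip blinks like a metronome, the other does not.
metronomic = [0.0, 4.0, 8.0, 12.0, 16.0]
varied = [0.0, 1.5, 7.0, 8.2, 15.0]

SUSPICIOUSLY_REGULAR = 0.2  # assumed threshold, for illustration only

for label, times in (("metronomic", metronomic), ("varied", varied)):
    score = blink_regularity(times)
    note = "worth a closer look" if score < SUSPICIOUSLY_REGULAR else "no flag"
    print(f"{label}: variability {score:.2f} ({note})")
```

A low score is a prompt for closer inspection, not proof of manipulation: a calm speaker reading a teleprompter can also blink very evenly.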

😠 The Emotional Mismatch Check

What to Look For: Incongruence between the expressed emotion and the content of the speech, or between the face and the voice. Does the candidate's face show micro-expressions of contempt while delivering a message of unity? Does the vocal stress pattern not match the anger visible on their face?

Why AI Fails: Current deepfake tech often manipulates one modality at a time (video or audio). Creating a perfectly synchronized, multi-modal emotional performance, where voice, face, and body language tell a coherent emotional story, is far harder. Our brains are finely tuned to spot these disconnects.

🧠 The Contextual Plausibility Audit (The Most Important)

What to Look For: Ask investigative questions that have nothing to do with pixels:

- Source: Who posted this first? An anonymous account or a known propaganda outlet?

- Timing: Is its release conveniently timed for maximum damage, right before a debate or vote?

- Action: Does the candidate do something utterly out of character based on a lifetime of observed behavior?

- Goal: Who benefits immediately and massively from this video being believed?

Why This Works: This check targets the strategist behind the deepfake, not the code. It leverages human understanding of motive, character, and political strategy—realms where AI has no footing.
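For teams that want to log this audit rather than run it from memory, the four questions above can be captured as a simple record. This is only a sketch: the class and field names are hypothetical, and the answers still come from a human investigator, not from code.

```python
from dataclasses import dataclass


@dataclass
class PlausibilityAudit:
    """One investigator's answers to the four contextual questions."""
    source_anonymous_or_propaganda: bool
    timed_for_maximum_damage: bool
    action_out_of_character: bool
    clear_immediate_beneficiary: bool

    def red_flags(self) -> list[str]:
        """Return the questions that raised a concern, in checklist order."""
        answers = {
            "Source": self.source_anonymous_or_propaganda,
            "Timing": self.timed_for_maximum_damage,
            "Action": self.action_out_of_character,
            "Goal": self.clear_immediate_beneficiary,
        }
        return [question for question, raised in answers.items() if raised]


# Hypothetical clip: anonymous uploader, released the night before a debate,
# behaviour in character, obvious beneficiary.
audit = PlausibilityAudit(True, True, False, True)
print(f"{len(audit.red_flags())} of 4 flags raised: {', '.join(audit.red_flags())}")
```

The count is a way to structure a conversation, not a score to act on automatically. A genuine leak can raise every one of these flags too.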

Often, your ears are better early detectors than your eyes. Listen to the audio first, without watching the video. Does the voice sound flat in emotional cadence? Are the breaths in unnatural places? Is the background noise consistent? Then, watch on mute. Do the lip movements seem exaggerated or slightly out of phase? Finally, watch with sound. Your brain will be primed to catch the disconnect.

🔗 Related Content: The Human in the Loop

This need for human judgment underscores a principle we explore in our analysis of Biohacking with Wearables: From Fitness to Pre-Symptomatic Diagnosis: the human body (and mind) generates complex, analog signals that are often the hardest to fake and the first to betray a synthetic copy.

Part 4: Why Deepfake Detection Will Never Be 100% Accurate

Chasing perfect detection is a fool's errand. This isn't a temporary technological lag; it's baked into the nature of the problem.

- The Asymmetry of Creativity vs. Detection: A detection tool must identify all possible forms of forgery, past, present, and future. A generative AI only needs to find one new way to fool the current detectors. This asymmetric warfare inherently favors the attacker.

- The Data Lag Problem: Detection models are trained on datasets of known deepfakes. By definition, they are always training on yesterday's technology. The most dangerous deepfake is one created by a new, unknown model—against which existing detectors have zero training data.

- The Compression Black Hole: The primary vector for political deepfakes is social media, which aggressively compresses video to save bandwidth. This compression erases the very digital fingerprints (subtle pixel gradients, noise patterns) that forensic tools rely on. The deepfake may be detectable pre-upload, but not post-compression.

- The "Reality Gap" Closing: As generative AI improves, the artifacts that separate synthetic from real become fewer and more microscopic. Theoretically, the gap could close entirely, making a "perfect" fake indistinguishable from reality at a data level. At that point, detection becomes impossible, and the battle shifts entirely to provenance (verifying origin).
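The compression point in the list above can be illustrated with a toy model. The sketch below stands in for lossy compression with plain quantization (snapping each pixel value to the nearest multiple of an assumed step) and stands in for a generator artifact with a faint plus-or-minus 2 pattern. Real codecs are far more complex, so treat this as a demonstration of the principle only; every name and number here is an assumption.

```python
import random

STEP = 16  # assumed quantization step, standing in for lossy compression


def compress(pixels: list[int], step: int = STEP) -> list[int]:
    """Toy lossy compression: snap every value to the nearest multiple of `step`."""
    return [step * round(p / step) for p in pixels]


random.seed(0)
clean = [STEP * random.randint(2, 13) for _ in range(1000)]  # stand-in for a real frame
fingerprint = [random.choice((-2, 2)) for _ in clean]        # faint generator artifact
fake = [p + f for p, f in zip(clean, fingerprint)]

# Count pixels where the fake differs from the clean frame, before and after compression.
before = sum(a != b for a, b in zip(fake, clean))
after = sum(a != b for a, b in zip(compress(fake), compress(clean)))
print(before, after)  # prints "1000 0"
```

Before compression, all 1,000 pixels carry the fingerprint. After it, none do, because the artifact is smaller than the rounding the compressor applies. A forensic tool that relies on that pattern has nothing left to find in the uploaded copy.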

This doesn't mean detection is worthless. It means its goal must shift from absolute prevention to raising the cost and latency of an attack. Effective detection makes it harder, slower, and more expensive to launch a successful, widespread deepfake disinformation campaign, buying time for human verification and debunking to take effect.

We must move past the binary question "Is this real or fake?" The new critical thinking framework is: "Given that this could be fake, what evidence do I have to trust its content?" This shifts the burden of proof to the source and aligns with journalistic standards.

Part 5: The Meta-Weapon: Exploiting the "Fake" Claim

Perhaps the most insidious development in the 2024-2026 cycle is not the use of deepfakes, but the weaponization of deepfake allegations. This is the "liar's dividend."

How It Works:

- A genuine, damaging video of a politician emerges (e.g., showing them accepting a bribe, using a racial slur).

- The implicated politician or their allies immediately, and without evidence, label it a "deepfake" or "AI-generated."

- They sow just enough doubt in their base to neutralize the video's impact, muddying the waters and eroding a shared factual foundation.

Why It's So Effective:

- It leverages the very public fear and awareness of deepfakes that articles like this one create.

- It requires no technical sophistication—just a bold, repeated claim.

- It turns the skepticism meant to protect democracy into a tool for evading accountability.

How to Counter It: This is where provenance and old-school investigation are paramount. The response must be: "If you claim it's fake, provide your evidence. Where is the original, unaltered footage? When and where do you allege this manipulation occurred?" Meanwhile, investigators work to verify the video's origin, chain of custody, and corroborating details (weather, clothing, independent witnesses).
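One small, concrete piece of chain-of-custody work is recording a cryptographic fingerprint of a file the moment you obtain it, so you can later show that your copy has not changed. The sketch below uses Python's standard `hashlib`; the helper name `fingerprint` and the file name in the comment are hypothetical.

```python
import hashlib
import io
from typing import BinaryIO


def fingerprint(stream: BinaryIO, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 digest of a binary stream, read in chunks so large videos fit in memory."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


# In practice: with open("clip_as_received.mp4", "rb") as f: fingerprint(f)
# In-memory bytes are used here so the example runs without any files.
received = fingerprint(io.BytesIO(b"example video bytes"))
archived = fingerprint(io.BytesIO(b"example video bytes"))
tampered = fingerprint(io.BytesIO(b"example video bytes!"))

print("archived copy matches:", received == archived)
print("tampered copy matches:", received == tampered)
```

A matching hash only shows that two copies are bit-for-bit identical. A platform re-encode of the same footage will produce a different hash, so this supports custody of your own copies rather than proving where a clip originally came from.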

🔗 Related Content: Systems Under Stress

This tactic of flooding the zone with doubt mirrors a systemic failure pattern we've documented in the business world. The strategy of denying reality to protect a fragile position is akin to The Silent Killer of Startups: How Poor Data Hygiene Dooms Growth, where ignoring foundational truths leads to catastrophic collapse.

Scenario: At 2 PM, a 30-second video clip starts trending on Platform X. It shows Candidate A in a dimly lit room, whispering to a foreign oligarch, "I'll get you the policy documents after the election."

Your Role: You are the head of rapid response for Candidate A's opponent.

Choice 1 (0-15 mins): Do you... A) Immediately amplify the video, condemning Candidate A? B) Pause and initiate a verification protocol?

If you chose B, proceed: Your team runs the video through two detection tools. One flags it as "likely synthetic" with 65% confidence. The other finds "no clear artifacts." Metadata is scrubbed. What now? A) Publicly label it a "probable deepfake" to kill its momentum. B) Hold, and task a researcher with finding the original source or a corroborating detail.

The Reveal (4 hours later): An independent geolocation expert matches the room's distinctive wallpaper to a known hotel in Brussels where Candidate A was indeed present last month. The video's authenticity is now probable.

The Lesson: The pressure to act quickly is the deepfake creator's greatest ally. The winning strategy is often a disciplined, procedural pause. Speed serves disinformation; deliberation serves truth.

🌟 Conclusion: The Truth About Deepfakes and Democracy

The investigation leads to a clear, nuanced, and urgent conclusion. The deepfake threat is real and evolving, but it is not an unstoppable magic bullet. It is a new form of political warfare that targets our cognitive vulnerabilities more than our software.

Your Best Defense is a Skeptical, Process-Driven Mindset

Forget silver-bullet detectors. Build a personal and institutional verification protocol: Source check -> Tool-based scan -> Human pattern audit -> Contextual plausibility test. This layered defense dramatically raises the cost of fooling you.
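An institution could write that protocol down as an ordered checklist that always runs to the end and defaults to caution. The sketch below is one hypothetical way to structure it: the stage functions are placeholders returning canned findings, where a real newsroom would plug in its own source research, detection tools, and reviewer notes.

```python
from typing import Callable, NamedTuple


class StageResult(NamedTuple):
    stage: str
    passed: bool
    note: str


Stage = Callable[[str], StageResult]


def run_protocol(clip: str, stages: list[Stage]) -> tuple[str, list[StageResult]]:
    """Run every stage in order; clear the clip only if all stages pass."""
    results = [stage(clip) for stage in stages]
    verdict = "cleared" if all(r.passed for r in results) else "hold and investigate"
    return verdict, results


# Placeholder stages with canned findings (hypothetical, for illustration only).
def source_check(clip: str) -> StageResult:
    return StageResult("source check", False, "first uploader is an anonymous account")

def tool_scan(clip: str) -> StageResult:
    return StageResult("tool-based scan", False, "two detectors disagree")

def human_pattern_audit(clip: str) -> StageResult:
    return StageResult("human pattern audit", True, "no obvious face/voice mismatch")

def plausibility_test(clip: str) -> StageResult:
    return StageResult("contextual plausibility test", True, "subject was in that city")


verdict, results = run_protocol(
    "trending-clip", [source_check, tool_scan, human_pattern_audit, plausibility_test]
)
print(verdict)
for r in results:
    print(f"{'PASS' if r.passed else 'FLAG'}  {r.stage}: {r.note}")
```

Running every stage, instead of stopping at the first flag, is a deliberate choice in this sketch: the full record is what lets a second person review the decision later.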

The Weaponized "Fake" Claim is as Dangerous as the Fake Itself

Be as skeptical of the immediate, evidence-free cry of "deepfake!" as you are of the viral video itself. This tactic seeks to make all evidence deniable, creating a nihilistic playground for bad actors.

Democracy's Ultimate Patch is Social, Not Technical

No algorithm will save us. The patch is widespread critical literacy, a culture that values provenance over virality, and institutions (like credible news outlets) that have the resources and mandate to practice deliberate verification.

Final Recommendation: The 24-Hour Rule

For the average voter, adopt this simple rule: No viral political video is to be believed, shared, or acted upon within the first 24 hours of its appearance. This creates the crucial time buffer for the verification process—both technical and human—to take place. In the 2024-2026 elections, your most powerful vote may be the one you don't click.
